Using Containers to Package AI Applications on Jetson: Solving JetPack Dependency and Deployment Challenges

NVIDIA officially adopted an Ubuntu 22.04-based environment in JetPack 6.x (6.0 / 6.2), corresponding to the L4T 36.x series. This is a major upgrade for developers. However, challenges remain: AI application dependencies are still fragmented across JetPack minor versions.

This article shows how to use Docker containers to package AI models and applications for fast, stable, version-agnostic deployment on JetPack 6.0 and 6.2.

Dependency Challenges in JetPack 6.x

JetPack 6.0 vs. 6.2:

| JetPack | L4T | Ubuntu | CUDA / TensorRT |
|---|---|---|---|
| 6.0 | 36.3.0 | 22.04 | CUDA 12.2, TensorRT 8.6 |
| 6.2 | 36.4.3 | 22.04 | CUDA 12.6, TensorRT 10.3 |

Although both are based on Ubuntu 22.04, the two releases still differ in GPU driver, CUDA Toolkit, and TensorRT versions, which leads to the following issues:

- A TensorRT engine compiled on JetPack 6.0 may not run correctly on JetPack 6.2
- The PyTorch GPU version must match specific CUDA and driver versions, making cross-version compatibility error-prone
- It becomes difficult to maintain consistent deployment of the same inference application across multiple devices
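
To tell which row of the table above a given device belongs to, one option is to read the L4T release string on the host. The sketch below assumes the release is recorded on the first line of /etc/nv_tegra_release in the form `# R36 (release), REVISION: 4.3, ...`. The function names are hypothetical, and the mapping covers only the two releases listed in the table.

```shell
# Hypothetical helpers; assumes the first line of /etc/nv_tegra_release
# looks like: "# R36 (release), REVISION: 4.3, GCID: ..."

# Turn that header line into a dotted L4T version such as 36.4.3.
parse_l4t_version() {
  printf '%s\n' "$1" |
    sed -n 's/^# R\([0-9][0-9]*\) (release), REVISION: \([0-9][0-9.]*\).*/\1.\2/p'
}

# Map an L4T version to the JetPack release from the table above.
jetpack_for_l4t() {
  case "$1" in
    36.3.*) echo "6.0" ;;
    36.4.3) echo "6.2" ;;
    *)      echo "unknown" ;;
  esac
}

# Print the host's L4T and JetPack versions (run this on the Jetson itself).
report_host_versions() {
  l4t=$(parse_l4t_version "$(head -n 1 /etc/nv_tegra_release)")
  echo "L4T ${l4t} -> JetPack $(jetpack_for_l4t "$l4t")"
}
```

Calling `report_host_versions` on each device before deployment gives a quick inventory of which JetPack releases a fleet actually runs.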

Benefits of Containerization

Decoupling JetPack Dependencies

Using Docker containers to package the AI runtime environment (including CUDA, cuDNN, TensorRT, PyTorch, etc.) allows all dependency versions to be locked inside the container, avoiding constraints from the underlying JetPack version.

The same container can run on both JetPack 6.0 and 6.2.

Starting from an L4T-based container image, such as dustynv/pytorch:2.1-r36.2.0 from the jetson-containers project, pins the environment to L4T 36.2. Even when JetPack is upgraded later, the same image can still be deployed as long as the host GPU driver remains compatible.
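
One way to keep this in check is to compare the L4T release encoded in an image tag with the release on the host before deploying. The sketch below assumes tags end in `-r<L4T release>`, as in the image tags used in this article, and treats a matching major version (36) as the compatibility rule. That rule is a simplifying assumption, not an NVIDIA guarantee, and the function names are hypothetical.

```shell
# Extract the L4T release from an image tag, e.g.
# dustynv/pytorch:2.1-r36.2.0 -> 36.2.0 (assumes a trailing "-r<release>").
image_l4t_release() {
  printf '%s\n' "$1" | sed -n 's/.*-r\([0-9][0-9.]*\)$/\1/p'
}

# Succeed when two L4T versions share the same major number (assumed rule).
same_l4t_major() {
  [ -n "$1" ] && [ -n "$2" ] && [ "${1%%.*}" = "${2%%.*}" ]
}

# Example: check an image against a host running L4T 36.4.3 (JetPack 6.2).
image="dustynv/pytorch:2.1-r36.2.0"
if same_l4t_major "$(image_l4t_release "$image")" "36.4.3"; then
  echo "$image targets the same L4T major version as the host"
else
  echo "$image was built for a different L4T major version"
fi
```

A stricter policy could compare minor versions as well; the major-only check simply mirrors the claim that one container can serve both JetPack 6.0 and 6.2.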

How To

Docker Runtime

If you need to use the GPU inside a container (e.g., to make the NVCC compiler and GPU available during the docker build process), it is recommended to set the default Docker runtime to nvidia. This ensures that GPU resources are properly accessible during both the build and runtime phases of the container.

Please follow the steps below:

- Install the nvidia-container package and Docker

sudo apt update

sudo apt install -y nvidia-container curl

curl https://get.docker.com | sh && sudo systemctl --now enable docker

sudo nvidia-ctk runtime configure --runtime=docker

- Restart the Docker service and add your user to the docker group

sudo systemctl restart docker

sudo usermod -aG docker $USER

newgrp docker

- Add the Docker configuration

Edit /etc/docker/daemon.json (create it if it does not exist) so that it contains the following:

    {
        "runtimes": {
            "nvidia": {
                "path": "nvidia-container-runtime",
                "runtimeArgs": []
            }
        },
        "default-runtime": "nvidia"
    }

- Restart Docker

sudo systemctl restart docker
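
After the restart, it can help to confirm that the setting took effect. The sketch below checks the configuration file with a simple pattern match and, separately, asks the daemon which runtime it uses by default. The function names are hypothetical, and the grep pattern assumes the key and its value sit on one line, as in the configuration shown above.

```shell
# Succeed if the given daemon.json sets "default-runtime" to "nvidia".
# $1: path to the file (defaults to /etc/docker/daemon.json).
has_nvidia_default_runtime() {
  grep -Eq '"default-runtime"[[:space:]]*:[[:space:]]*"nvidia"' \
    "${1:-/etc/docker/daemon.json}"
}

# Ask the running daemon for its default runtime (requires Docker access).
docker_default_runtime() {
  docker info --format '{{.DefaultRuntime}}'
}
```

On the device, `has_nvidia_default_runtime && docker_default_runtime` is expected to report `nvidia` once both the file edit and the restart have succeeded.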

NVIDIA provides CUDA Containers for Jetson Orin

Install jetson-containers

cd $HOME

git clone https://github.com/dusty-nv/jetson-containers

bash jetson-containers/install.sh

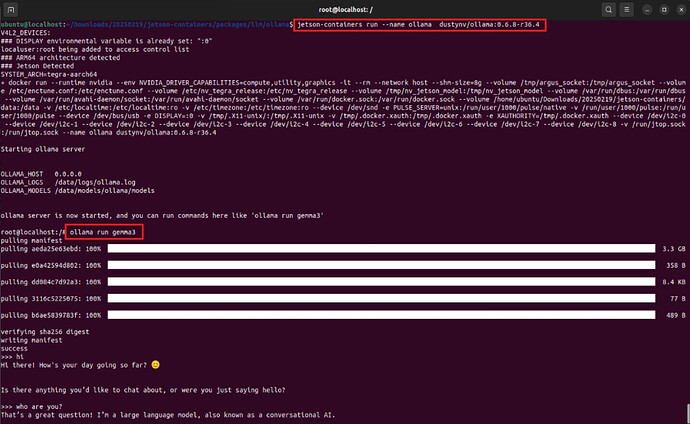

GenAI Example: Ollama Inference

- Download the Docker image:

docker pull dustynv/ollama:0.6.8-r36.4

- Run the container:

jetson-containers run dustynv/ollama:0.6.8-r36.4

- Run Ollama with the gemma3 model:

ollama run gemma3
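
Once the model is loaded, an application can also call it over Ollama's HTTP API instead of the interactive prompt. The sketch below assumes the server listens on its default port 11434 and that the port is reachable from where the command runs (for example, because the container shares the host network). The function names are hypothetical, and the payload builder does no JSON escaping, so the prompt must not contain quotes or backslashes.

```shell
# Build a non-streaming request body for Ollama's /api/generate endpoint.
# $1: model name, $2: prompt (must not contain quotes or backslashes).
ollama_payload() {
  printf '{"model": "%s", "prompt": "%s", "stream": false}' "$1" "$2"
}

# Send the prompt to a local Ollama server and print the JSON response.
# Assumes the default address http://localhost:11434.
ollama_generate() {
  curl -s http://localhost:11434/api/generate -d "$(ollama_payload "$1" "$2")"
}
```

For example, `ollama_generate gemma3 "Why is the sky blue?"` would send one prompt and print the server's JSON reply.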